So, since WebGL first started appearing in browsers, people's natural instinct has been to create a 3D canvas that fills the entire browser window. Obviously this is because we like our 3D games to run full-screen (or as close to it as we can get). But you'll notice that I usually have my demos run in a window (typically 854x480). The reason is that, while WebGL was still gaining steam, there was a severe performance penalty directly tied to the size of your canvas. (See this early thread for a good idea of what I'm talking about.)

Of course, things have improved on the browser side, and computers keep getting faster, so this problem isn't as noticeable anymore. That doesn't mean it has disappeared, though. Netbooks, Chromebooks, and the like are still popular, and they don't have a lot of muscle. WebGL-capable mobile devices and tablets probably aren't too far off either. (N900, anyone?) In these environments it would be great to preserve that fullscreen feel (especially on mobile!) while still maintaining a reasonable framerate (hopefully ~30 fps or more).

Since the dawn of 3D games we've had the ability to render at a lower res than your monitor is capable of and still have it fill the screen. Gamers are often willing to deal with some jagged edges to get smoother gameplay (but not many want to play in a window the size of a postage stamp.) So, is this an effect that we can emulate on the web? As it turns out, yes! I was playing with just such a situation a couple of days ago and stumbled on a great little hack.

The idea is simple: create the WebGL canvas at a lower resolution (say, half the width and height), and use CSS3 transforms to scale it up to fill the browser window. The code is short:

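```javascript
// Create a WebGL canvas at half the window size
// (the non-standard document.width/height are not supported in current browsers)
var canvas = document.getElementById("glCanvas");
canvas.width = Math.floor(window.innerWidth / 2);
canvas.height = Math.floor(window.innerHeight / 2);
```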

And apply the following CSS style to the canvas element:

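```css
#glCanvas {
  /* Anchor to the upper left */
  position: absolute;
  top: 0;
  left: 0;

  /* Scale out 2X from the corner (prefixed and standard forms) */
  -webkit-transform: scale3d(2.0, 2.0, 1.0);
  -webkit-transform-origin: 0 0 0;
  transform: scale3d(2.0, 2.0, 1.0);
  transform-origin: 0 0 0;
}
```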
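The fixed 2X rule above ties the CSS scale to exactly half resolution. If you want to experiment with other ratios, one option is to set the transform from script instead. The following is a sketch under my own assumptions, not the demo's code. `applyRenderScale` is a hypothetical helper. It computes the upscale separately for each axis, so that rounding the drawing buffer down to whole pixels still lets the canvas cover the viewport.

```javascript
// Hypothetical helper (not from the demo): render at a fraction of the
// viewport size and stretch the canvas back up with a CSS transform.
function applyRenderScale(canvas, viewportWidth, viewportHeight, renderScale) {
  // Drawing-buffer size, rounded down to whole pixels (never below 1x1).
  const width = Math.max(1, Math.floor(viewportWidth * renderScale));
  const height = Math.max(1, Math.floor(viewportHeight * renderScale));
  canvas.width = width;
  canvas.height = height;

  // Per-axis upscale so the canvas still covers the viewport after rounding.
  const scaleX = viewportWidth / width;
  const scaleY = viewportHeight / height;
  canvas.style.transformOrigin = "0 0 0";
  canvas.style.transform = `scale3d(${scaleX}, ${scaleY}, 1)`;
  return { width, height, scaleX, scaleY };
}
```

In a page you might call it as `applyRenderScale(document.getElementById("glCanvas"), window.innerWidth, window.innerHeight, 0.5)`. In current browsers, the inline transform takes precedence over the stylesheet's transform.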

And done! Everything else works just like a standard WebGL app. In my experience there is a small performance hit for the upscale (and yes, it interpolates), but it's nowhere near the cost of rendering at full resolution, which means shading four times as many pixels. On one slower machine I tried, the full-resolution render ran at 6 fps, the half-size render at 20 fps, and the upscaled half-size render at about 16 fps. Not bad numbers overall!
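Converting those frame rates into milliseconds per frame makes the trade-off easier to see. This is just arithmetic on the three measurements quoted above, and the variable names are illustrative.

```javascript
// Frame rates measured on one slower machine, as reported above.
const measuredFps = {
  fullResolution: 6,
  halfResolution: 20,
  halfResolutionUpscaled: 16,
};

// Milliseconds spent on each frame at a given frame rate.
function frameTimeMs(fps) {
  return 1000 / fps;
}

for (const [mode, fps] of Object.entries(measuredFps)) {
  console.log(`${mode}: ${fps} fps = ${frameTimeMs(fps).toFixed(1)} ms/frame`);
}

// Extra time per frame added by the CSS upscale: 62.5 - 50 = 12.5 ms.
const upscaleCostMs =
  frameTimeMs(measuredFps.halfResolutionUpscaled) - frameTimeMs(measuredFps.halfResolution);
// Extra time per frame for the 4x larger buffer: about 166.7 - 50 = 116.7 ms.
const fullResCostMs =
  frameTimeMs(measuredFps.fullResolution) - frameTimeMs(measuredFps.halfResolution);
```

On that machine the upscale itself costs roughly 12.5 ms per frame. Drawing the full-size buffer costs about 117 ms more than the half-size render.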

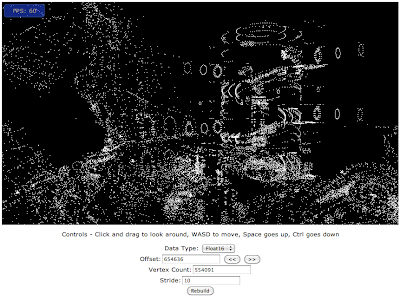

As a proof of concept, I retrofitted the technique onto my Quake 3 demo, which has a new variant here:

Full Screen Quake 3 (Touch enabled)

I've also taken the time to add some basic touch controls to the demo, since this technique will probably benefit mobile devices most as they gain WebGL capabilities.

- One-finger drag: Look around

- Two-finger drag: Move/strafe

- Three-finger tap: Jump
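The demo's touch-handling code isn't shown here, so the following is only a hypothetical sketch of how the gestures above could be mapped onto standard touch events. `actionForGesture`, `attachTouchControls`, and the `"look"`/`"move"`/`"jump"` action names are made up for illustration. Treating any touch that brings the finger count to three as a jump is a simplification of a real tap detector.

```javascript
// Hypothetical mapping from a gesture to an action, following the list above.
function actionForGesture(fingerCount, isDrag) {
  if (isDrag && fingerCount === 1) return "look";
  if (isDrag && fingerCount === 2) return "move";
  if (!isDrag && fingerCount === 3) return "jump";
  return null; // any other gesture is ignored
}

// Hypothetical wiring: reports (action, dx, dy) in CSS pixels to onAction.
function attachTouchControls(element, onAction) {
  let last = null; // previous position of the first touch point

  element.addEventListener("touchstart", (event) => {
    event.preventDefault(); // keep the page from scrolling or zooming
    const t = event.touches[0];
    last = { x: t.pageX, y: t.pageY };
    // Simplification: the touch that brings the count to three triggers a jump.
    if (event.touches.length === 3) onAction(actionForGesture(3, false), 0, 0);
  }, { passive: false });

  element.addEventListener("touchmove", (event) => {
    event.preventDefault();
    const t = event.touches[0];
    const action = actionForGesture(event.touches.length, true);
    if (action && last) onAction(action, t.pageX - last.x, t.pageY - last.y);
    last = { x: t.pageX, y: t.pageY };
  }, { passive: false });
}
```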

A small caveat for this demo is that the canvas will not scale to fill the window dynamically as you resize, but that wouldn't be too hard to add. Still, it's really cool to put your browser in fullscreen mode and see corner-to-corner WebGL running at a decent speed on almost any device!
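One possible way to handle resizing builds on the fact that the stylesheet applies a fixed 2X scale. In this sketch, a resize handler only has to update the half-size drawing buffer and the WebGL viewport. The viewport is not updated automatically when the canvas changes size. `resizeHalfResCanvas` and `watchWindowSize` are hypothetical names. Batching resize events into a single `requestAnimationFrame` callback is one design choice among several, not the demo's.

```javascript
// Hypothetical helper: keep the half-resolution canvas in sync with the window.
// Assumes the #glCanvas stylesheet rule (fixed 2X scale3d) is unchanged.
function resizeHalfResCanvas(canvas, gl, viewportWidth, viewportHeight) {
  const width = Math.max(1, Math.floor(viewportWidth / 2));
  const height = Math.max(1, Math.floor(viewportHeight / 2));
  if (canvas.width === width && canvas.height === height) return false;
  canvas.width = width; // changing the size resets the drawing buffer
  canvas.height = height;
  gl.viewport(0, 0, width, height); // viewport does not follow the canvas on its own
  return true;
}

// Hypothetical browser wiring: coalesce bursts of resize events into one
// update per animation frame, then report the new aspect ratio.
function watchWindowSize(canvas, gl, onResize) {
  let pending = false;
  window.addEventListener("resize", () => {
    if (pending) return;
    pending = true;
    requestAnimationFrame(() => {
      pending = false;
      if (resizeHalfResCanvas(canvas, gl, window.innerWidth, window.innerHeight)) {
        onResize(canvas.width / canvas.height);
      }
    });
  });
}
```

The `onResize` callback receives the new aspect ratio, so the app can rebuild its projection matrix. With an odd window dimension, rounding down leaves the scaled canvas one CSS pixel short on that axis.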

So now the fun part: What's the coolest device you can get this sucker to run on?