I'm really blown away by the response to the video of my RAGE WebGL demo! It's been getting a lot of word of mouth on Twitter and it's been really fun to follow what people have been saying about it on sites like reddit. And most of what I've been hearing is positive too! Which is awesome... mostly...

...except I think people don't really understand what they're seeing. I'm getting a lot of credit for doing an awesome WebGL demo, and certainly I'm proud of it, but the fact is that the real work on my part was reverse engineering the format. Once that was done, the rendering was pretty trivial. And if the video looks awesome, then ALL of the credit for that goes to id Software's incredible art and tools teams! The fact is, outside of some careful management of the textures, this project pales in comparison to the complexity of, say, my Quake 3 demo. Of course, to a certain degree that was the point of this whole exercise...

I'll talk about the rendering in a moment, but first I want to back up and talk about why I chose to do this project and how I figured out the format in the first place, since that's the bit that I found the most interesting. If you don't care much about that aspect, skip down to the "Rendering details" section. I'll warn you now, this will end up being quite a bit longer than the Quake 3 tech post since a lot of it will be stepping through my thought process. Reader beware...

Choosing Rage

So, obviously I have an affinity for the id formats. This, combined with seeing a working virtualized texturing implementation in WebGL, got me thinking about how feasible it might be to render a level from RAGE when it came out on the PC. Obviously the game not being out yet put a damper on that, and there are a lot of technical issues to consider, but the desire has always tickled at the back of my mind.

When I was asked to speak at OnGameStart about my Quake 3 demo, I knew immediately that I wanted to build a new demo that I could show off alongside it, and I wanted it to be a format that was better suited for the web. Quake 3 makes for an impressive "look what I can do" demo, but it relies pretty heavily on the assumption that all of your resources reside on a local disk, and that the player doesn't mind waiting for the whole level to load before doing anything. It also assumes that it's faster to cull and render many small batches of geometry instead of pushing large batches to the card at once. These are not very web-friendly assumptions. And to top it off it's a first person shooter, which means that the controls are basically impossible to do nicely in JavaScript.

A good web-oriented format is one that can have small chunks streamed down to the player at a time while they navigate the environment, so you aren't stuck looking at the "loading screen of doom" for several minutes. Said format would also be built around the idea of changing state as little as possible, rendering geometry in large batches, and making geometry culling as trivial as possible. Ideally it would also be designed with a control scheme in mind that didn't grind against the nature of a web page (i.e., one that didn't require total mouse control).

It took a couple of days, but at some point it suddenly dawned on me that, based on everything I had read about it from various interviews, RAGE on the iPhone seemed to meet a lot of those criteria! Plus, it was built for a mobile device, so I knew it wouldn't be too outrageous in terms of performance requirements. And, yeah, it wasn't "the" RAGE that I had been thinking about, but it was pretty close. I knew I had to at least give it a try! So I asked a friend who owned the SD version of the game if I could rip some of the resources from his copy to play around with. (I later bought a copy of the HD version.) The files in question were pretty easy to find (SD_RageLevel1.iosMap/Tex), so within a few minutes I had all of the required files to pursue my new pet project...

...and no freaking idea what the files contained.

Figuring out the Format

Well, yeah, I knew they contained a map and textures respectively, but how those were formatted was anyone's guess. Unlike many of id's past games there's no community documentation of these formats (well, NOW there is...) so I was left with nothing but some assumptions based on what I had read and seen. Amazingly, many of my assumptions proved to be correct or at least pretty close. (I got lucky on that one.)

- Since performance was obviously a concern, I didn't think the files would be encrypted or intentionally obfuscated (beyond, you know, being binary)

- It seemed reasonable that some elements of the Quake BSP files (like the basic lump structure) would carry over to this format

- I knew that the textures were 2BPP PVR, based on interviews that Carmack had given about the game, and I assumed they would be in an easy-to-find and easy-to-parse form to keep rendering fast

- I assumed there would be a player path stored in there somewhere, and that it would have visibility and texture information associated with it

- And finally, I assumed that SOMEWHERE in that big ol' lump of 1s and 0s there would be a list of XYZ coordinates

That last assumption seemed like a pretty reasonable place to start, so I got busy trying to figure out where those coordinates were.

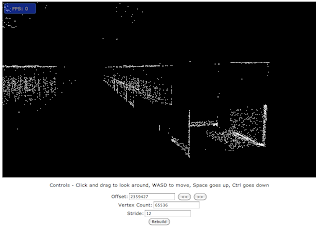

To that end, I grabbed my WebGL sandbox, hacked up a quick and dirty form that let me specify an offset into the file, a byte stride, and an element count, and started brute-force stepping through the file to see if anything looked sensible. I got an awful lot of this for a while:

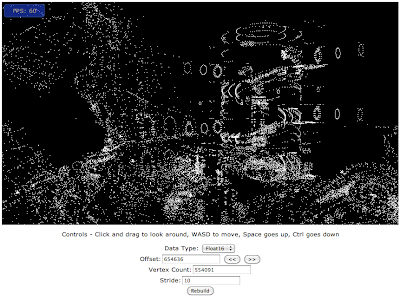
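
A minimal Python sketch of that kind of probe might look like the following. It is not the original sandbox tool: `probe_xyz` is a hypothetical name, and little-endian byte order is an assumption (the iOS hardware of the time was little-endian ARM). Switching `fmt` between `"<3f"` (32-bit floats) and `"<3h"` (16-bit shorts) is the kind of one-character change that separates noise from geometry.

```python
import struct

def probe_xyz(data: bytes, offset: int, stride: int, count: int, fmt: str = "<3f"):
    """Read up to `count` XYZ triples starting at `offset`, `stride` bytes apart.

    `fmt` describes one triple: "<3f" for 32-bit floats, "<3h" for 16-bit
    signed shorts (little-endian assumed). Returns the triples and a per-axis
    (min, max) bounding box, a cheap way to tell noise from plausible geometry.
    """
    size = struct.calcsize(fmt)
    points = []
    for i in range(count):
        start = offset + i * stride
        if start + size > len(data):
            break  # stepped past the end of the file
        points.append(struct.unpack_from(fmt, data, start))
    if not points:
        return [], None
    bbox = [(min(p[axis] for p in points), max(p[axis] for p in points)) for axis in range(3)]
    return points, bbox
```

A bounding box with wildly lopsided or astronomically large extents usually means the offset, stride, or type is wrong.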

The problem ended up being that I assumed those XYZ values would be 32 bit floats. After a day or so of not getting any results at all, it suddenly dawned on me "Hey! This is built for OpenGL ES, maybe they're using something more compact." My first instinct was half-precision floats, but that didn't give me much better results. Eventually I tried parsing the values as shorts, and after a little bit more browsing...

Hallelujah! Now THAT looks like a level!

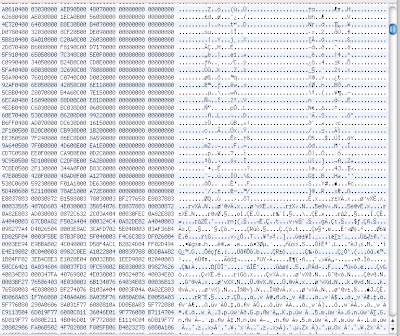

So now I knew that the vertex array consisted of 5 shorts: an X, Y, Z position and what I could only assume was a texture coordinate for the last 2 values. That was great info, but I didn't know where the vertex list started or how long it was. For this, I started looking at the file in a hex editor. On a quick scroll through, it was easy to see that there were several distinct sections of "patterns" in the hex and ASCII data, so it should be pretty easy to pick out where one section ended and another began. I started by jumping to the offset that I had hit in my visualization tool and tracing backwards, looking for a change in the general "look" of the data.
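
With the 10-byte stride known, decoding a whole vertex lump is straightforward with Python's `struct` module. This is a sketch under two assumptions: little-endian data, and the split into signed positions versus unsigned texture coordinates (the post only establishes that all five values are shorts). `read_vertices` is a hypothetical helper.

```python
import struct

# x, y, z as signed 16-bit; u, v as unsigned 16-bit (u/v signedness is an assumption)
VERTEX_FORMAT = "<3h2H"
VERTEX_SIZE = struct.calcsize(VERTEX_FORMAT)  # 10 bytes per vertex

def read_vertices(data: bytes, offset: int, count: int) -> list[tuple[int, ...]]:
    """Decode `count` packed vertices starting at byte `offset`."""
    chunk = data[offset:offset + count * VERTEX_SIZE]
    if len(chunk) != count * VERTEX_SIZE:
        raise ValueError("vertex lump runs past the end of the data")
    return list(struct.iter_unpack(VERTEX_FORMAT, chunk))
```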

And I certainly found it, sticking out like a sore thumb. As I said earlier, I assumed that this format would be similar to the other id formats in how it indexed its lumps, so I guessed I would find something near the top of the file that pointed me at this spot. I took the byte offset of that "border", searched the file for anything that matched that value (427432), and found a match 144 bytes in. That sounds like a header to me!

Next I traced my way down through the vertex values till I found another "border" (this one was harder to spot, but still visible), and calculated the size in bytes of that lump. (4224760) I tried finding that value near the top of the file, but failed. I did, however, find 422476, which happened to be the byte size of the lump divided by 10, which I knew to be the size of each vertex. It seemed very likely that this represented the element count for that lump.
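
Both header searches are easy to script. The sketch below scans the start of a file for a value stored as a little-endian 32-bit integer (the byte order and the 1024-byte search window are assumptions) and converts a lump's byte size into the element count the header actually stores. Both function names are hypothetical.

```python
import struct

def find_u32(data: bytes, value: int, limit: int = 1024) -> list[int]:
    """Byte offsets within the first `limit` bytes where `value` appears
    as a little-endian unsigned 32-bit integer (alignment not required)."""
    needle = struct.pack("<I", value)
    hits = []
    pos = data.find(needle, 0, limit)
    while pos != -1:
        hits.append(pos)
        pos = data.find(needle, pos + 1, limit)
    return hits

def element_count(lump_bytes: int, element_size: int) -> int:
    """Header counts are in elements, not bytes: divide by the element size."""
    if lump_bytes % element_size:
        raise ValueError("lump size is not a multiple of the element size")
    return lump_bytes // element_size
```

With the numbers above, `element_count(4224760, 10)` gives 422476, the value that does appear near the top of the file.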

I played around with some more of the numbers near the top of the file and pretty quickly was able to match up a list of offsets, element counts, and element sizes for 5 different lumps. There was a mystery 6th chunk of the file that none of these header values seemed to reference, but I could figure that out later. My next task was to figure out what each of my newfound lumps represented.
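
With a handful of (offset, count, element size) triples in hand, one way to spot a region the header never references, like that mystery sixth chunk, is to sort the lumps and list the byte ranges nobody covers. A sketch with a hypothetical `find_gaps` helper; the first gap will naturally include the header itself.

```python
def find_gaps(lumps, file_size: int) -> list[tuple[int, int]]:
    """Given (offset, count, element_size) triples, return the [start, end)
    byte ranges that no lump covers. A large unreferenced gap is a
    candidate for a lump the header does not describe."""
    gaps = []
    cursor = 0
    for offset, count, size in sorted(lumps):
        if offset > cursor:
            gaps.append((cursor, offset))
        cursor = max(cursor, offset + count * size)  # end of the furthest lump so far
    if cursor < file_size:
        gaps.append((cursor, file_size))
    return gaps
```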

The first I already knew to be vertices. The second I found I could guess at pretty easily. Looking at the values a very distinct trend began to emerge. Almost all of the values looked like this:

1,2,3 4,3,2

That may seem like gibberish, but most graphics developers would probably recognize that as a familiar pattern. When indexing the faces of a square, you build two triangles. These triangles need to keep the same winding (counter clockwise or clockwise) for face culling to work, so you almost always store your indices for a square face in the above pattern. And this lump was FULL of that pattern! It didn't take too much to determine that this lump was an array of unsigned shorts that represented indices. (And that fit very well with OpenGL ES, which can't accept anything larger than an unsigned short for indices.)
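
That pattern is regular enough to detect automatically. The hypothetical helper below measures what fraction of six-index groups look like (a, b, c, d, c, b), two triangles sharing the edge b-c traversed in opposite directions. It assumes quads start on six-index boundaries, which is a simplification; a lump of quad indices scores high, while unrelated data should rarely match.

```python
def quad_pattern_ratio(indices) -> float:
    """Fraction of 6-index groups shaped like (a, b, c, d, c, b)."""
    groups = len(indices) // 6
    if groups == 0:
        return 0.0
    hits = 0
    for g in range(groups):
        a, b, c, d, e, f = indices[6 * g:6 * g + 6]
        # second triangle reuses the shared edge in reverse, fourth vertex is new
        if e == c and f == b and len({a, b, c, d}) == 4:
            hits += 1
    return hits / groups
```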

So now equipped with my list of verts and my array of indices, it seemed like I should be able to start rendering triangles, right? Well... sort of.

If I only rendered the first few hundred indices as a triangle list I got what looked like very sensible geometry. After around 300 or so, however, the triangles started to criss-cross the map in weird and very obviously wrong ways. And really, this made sense. Since the indices were unsigned shorts, the maximum value they could hold was 65535, but we had 422476 vertices! We simply couldn't index them all unless we were using an offset of some sort.

So now it was time to get creative. I knew that in order to reach all of the vertices, my offsets would have to be stored as longs, so I started parsing values from all the remaining lumps as longs and watching the min and max values as I went. What I was looking for were values that never dipped below 0 and never exceeded what I knew the vertex and index counts to be. Any values that fell outside of that range couldn't possibly be my offsets. As fortune would have it, I found those values in the first and third longs of each element in the third lump. The first matched very nicely with the vertices, and the second matched very nicely with the indices.
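
The min/max trick is easy to reproduce. This sketch treats each "long" as a little-endian signed 32-bit integer (long was 32 bits on the iOS devices of the time, but the file layout is still an assumption), reads a lump as fixed-size records, and reports which columns stay within [0, limit]. `column_ranges` and `plausible_offset_columns` are hypothetical names.

```python
import struct

def column_ranges(data: bytes, offset: int, count: int, stride: int):
    """Read `count` records of `stride` bytes, view each 4-byte column as a
    little-endian signed 32-bit integer, and return (min, max) per column."""
    columns = stride // 4
    ranges = [None] * columns
    for i in range(count):
        record = struct.unpack_from(f"<{columns}i", data, offset + i * stride)
        for col, value in enumerate(record):
            if ranges[col] is None:
                ranges[col] = (value, value)
            else:
                lo, hi = ranges[col]
                ranges[col] = (min(lo, value), max(hi, value))
    return ranges

def plausible_offset_columns(ranges, limit: int) -> list[int]:
    """Columns whose values never leave [0, limit]. Anything else cannot be
    an offset into an array of `limit` elements."""
    return [col for col, r in enumerate(ranges)
            if r is not None and r[0] >= 0 and r[1] <= limit]
```

Running the filter once with `limit` set to the vertex count and once with the index count narrows the candidates quickly.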

(Side note: I later discovered that the second and third values of that lump represented the index and vertex counts, so I could have actually started rendering things right here, but for some reason that never occurred to me.)

On the assumption that these were my offsets, I then went looking for something else that would reference them. Using my min/max trick again, I determined that the first long in the fourth lump matched the offset element count exactly, and the following two longs were typically nice, low numbers. I took a wild guess that one of these would be a vertex count and started playing around with it. It took a few tries, but eventually I hit on the sweet spot and discovered that the second value was actually an index offset to be applied on top of the previously discovered offset, and the third value was the vertex count. And now... we had solid walls! I had found my meshes.
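
Put together, resolving a mesh into draw parameters takes two lookups. In this sketch the class and field names are hypothetical, and the third mesh value is simply passed through as the draw count (the text calls it the vertex count). The key point is that 16-bit local indices are relative to a per-batch base vertex, which is how all 422476 vertices stay reachable.

```python
from dataclasses import dataclass

@dataclass
class OffsetEntry:      # element of the third lump (hypothetical names)
    vertex_offset: int  # first long: base vertex for this batch
    index_offset: int   # third long: first index for this batch

@dataclass
class MeshEntry:        # element of the fourth lump (hypothetical names)
    offset_id: int      # first long: which OffsetEntry this mesh uses
    index_start: int    # second long: added on top of OffsetEntry.index_offset
    draw_count: int     # third long: passed straight through as the draw count

def resolve_mesh(mesh: MeshEntry, offsets: list[OffsetEntry]) -> tuple[int, int, int]:
    """Return (base_vertex, first_index, count) for one mesh."""
    entry = offsets[mesh.offset_id]
    return entry.vertex_offset, entry.index_offset + mesh.index_start, mesh.draw_count
```

WebGL 1 has no base-vertex draw call, so one way to apply `base_vertex` is to point `vertexAttribPointer` at byte offset `base_vertex * 10` (the vertex size), then call `drawElements` with `count` and a byte offset of `first_index * 2` into the unsigned-short index buffer.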

Now, all of that happened over the course of three afternoons' worth of tinkering, and things slowed down a bit after that, so rather than bore you with all the details I'll just hit the high points. I figured out that the last lump started with 7 floats just by observing that their values were reasonably sane in the hex editor (by sane I mean nothing big enough to trigger scientific notation, no NaNs, etc.). I also observed that the mins and maxes of those elements tended to be within the range of a short or between -1 and 1. It struck me a day later that these values probably represented my path and orientation through the level, and some quick renderings of the values proved my hunch correct.
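
Both observations are simple to automate. The sketch reads seven little-endian floats and splits them into an XYZ position followed by an XYZW quaternion; that split matches the position and quaternion described under rendering details, but the ordering within the record is an assumption. `looks_sane` encodes the hex-editor heuristic, and a quaternion that really encodes orientation should have unit length. All three function names are hypothetical.

```python
import math
import struct

def read_path_floats(data: bytes, offset: int):
    """Split the 7 leading floats of a path element into (position, quaternion).
    The position-then-quaternion ordering is an assumption."""
    values = struct.unpack_from("<7f", data, offset)
    return values[:3], values[3:]

def looks_sane(values, limit: float = 1e6) -> bool:
    """Finite, and small enough not to need scientific notation."""
    return all(math.isfinite(v) and abs(v) < limit for v in values)

def is_unit_quaternion(q, tol: float = 1e-3) -> bool:
    """Orientation quaternions should have (close to) unit length."""
    return abs(math.sqrt(sum(c * c for c in q)) - 1.0) < tol
```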

I figured out that the path elements also contained offsets into the file and element counts simply by looking for values that referenced the aforementioned "missing lump", and, once again using my min/max tracking, discovered that the list of values they pointed to corresponded with the mesh lump elements. It was pretty obvious from there that these were meant to be the meshes visible from each point along the path.

The part where I really got hung up was the textures.

Getting the textures out of the massive .iosTex file proved easier than I initially thought. As I mentioned earlier, I knew that the textures were 2BPP PVR based on an interview Carmack had given and a blog post he had written. Using PVRTexTool I was able to open the texture file as a "RAW" file with the appropriate compression format and visually determine that the textures were stored as 1024x1024 tiles. I saved one of these tiles to disk to see what the file size would be (327732 bytes every time; PVR is a fixed-rate compression scheme). I then divided the total texture file size by that number (minus the PVR header size) to get the number of textures in the single file. Finally, I wrote a quick Python script to extract each tile-sized chunk (the 327732 bytes minus the header), stick a predetermined PVR header on it, and in no time flat I had a folder full of individual PVR files.
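
The extraction step is short because PVRTC is fixed-rate. This is a sketch of the idea, not the original script: `split_tiles` is a hypothetical name, and the header bytes are supplied by the caller because the exact predetermined header isn't given here. Each tile's payload is the saved tile's file size minus the header length.

```python
def split_tiles(blob: bytes, header: bytes, tile_file_size: int = 327732) -> list[bytes]:
    """Cut a headerless texture blob into fixed-size tiles and prefix each
    one with the same PVR header, producing standalone PVR file contents."""
    payload = tile_file_size - len(header)
    if payload <= 0:
        raise ValueError("header is larger than a tile")
    if len(blob) % payload:
        raise ValueError("blob size is not a whole number of tiles")
    return [header + blob[i:i + payload] for i in range(0, len(blob), payload)]
```

Writing each returned tile to its own `.pvr` file (for example with `pathlib.Path.write_bytes`) gives the folder of individual textures.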

Of course, PVR files don't do you any good in a browser, so I had to convert them into JPEGs. That, surprisingly, proved to be quite the challenge since apparently nobody ever bothered to write a command line converter to get images OUT of the PVR format, only in. I eventually wrote up a quick and dirty one myself using the PVR and JPEG C libs. Long story short, I got all the images in a usable format.

I had no idea how they matched up with the meshes, though. I think the thing that threw me was that I identified pretty quickly that an array of 16 shorts in the path elements lined up nicely with the texture count. As such, I thought that those 16 textures were the only ones used at that point of the path, and I kept searching for a value that tied each mesh back to one of those 16 textures. I got stuck on that one problem for nearly a week before throwing my hands up and (as I said in the video) going to Carmack for help.

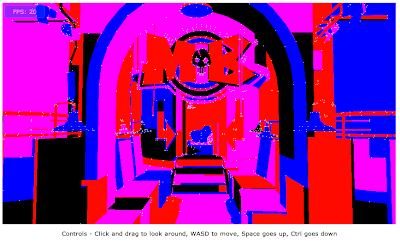

Based on his reply, I took another look at the values I had already parsed and realized, to my shock, that the number of "offset" elements in the map ALSO exactly matched the number of textures! In fact, beyond being simply a list of offsets, these elements were implicitly the texture index for every mesh that used them! That discovery, paired with the realization that the 16 textures on each point represented upcoming textures (for streaming) rather than ones currently in use, tied up all the loose ends for me. The final trick was determining (after some experimenting) that the texture coordinates were read in as shorts that needed to be "unpacked" into a 0.0 to 1.0 range float to line up with the textures correctly. Within a day I had my first glimpse of a fully textured map.
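
A sketch of that unpacking, assuming the coordinates are unsigned 16-bit values normalized by 65535 (the exact mapping is an assumption). In WebGL the same conversion can happen on the GPU by passing `gl.UNSIGNED_SHORT` with `normalized` set to true in `vertexAttribPointer`, which maps 0..65535 onto 0.0..1.0. `unpack_texcoord` is a hypothetical name.

```python
def unpack_texcoord(u: int, v: int) -> tuple[float, float]:
    """Map unsigned 16-bit texture coordinates onto the 0.0..1.0 range."""
    if not (0 <= u <= 0xFFFF and 0 <= v <= 0xFFFF):
        raise ValueError("expected unsigned 16-bit values")
    return u / 0xFFFF, v / 0xFFFF
```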

At that point, all that remained was to do some rendering optimizations and tweak the controls a little, and then it was off to YouTube for all of your viewing pleasure. Obviously that's the Reader's Digest version of it all, but hopefully it gives you some insight into the reverse-engineering process that went into this demo.

Rendering Details

Compared to figuring out the format, the rendering process for these maps practically handles itself, which is a massive credit to the people who designed the format and the tools that power it. Everything is packed in a manner that lets you change state as little as possible, and effectively forces you to render only the geometry that is visible from where the player is standing.

The central mechanism to the rendering is actually the path that the player follows. Each point along the path contains a position and quaternion (for orientation), and movement along the path is done by interpolating between the positions and orientations at the desired speed.
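
A minimal sketch of that interpolation: linear interpolation for positions and spherical linear interpolation (slerp) for the quaternions. The (x, y, z, w) component order and parameterizing by a fractional point index rather than world-space distance are both assumptions for illustration; advancing `along` by speed times elapsed time each frame produces the movement.

```python
import math

def lerp(a, b, t):
    """Component-wise linear interpolation."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))

def slerp(q0, q1, t):
    """Spherical linear interpolation between unit quaternions (x, y, z, w)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:  # q and -q are the same rotation; take the shorter arc
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:  # nearly identical: normalized lerp avoids dividing by ~0
        q = lerp(q0, q1, t)
        norm = math.sqrt(sum(c * c for c in q))
        return tuple(c / norm for c in q)
    theta = math.acos(dot)
    s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
    s1 = math.sin(t * theta) / math.sin(theta)
    return tuple(s0 * a + s1 * b for a, b in zip(q0, q1))

def sample_path(points, along):
    """points: list of (position, quaternion), at least two entries.
    `along` is a fractional point index: 2.25 is a quarter of the way
    from point 2 to point 3. Values outside the path are clamped."""
    along = max(0.0, min(along, len(points) - 1))
    i = min(int(along), len(points) - 2)
    t = along - i
    (p0, q0), (p1, q1) = points[i], points[i + 1]
    return lerp(p0, p1, t), slerp(q0, q1, t)
```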

The path points contain a list of up to 16 texture IDs that will be visible from an upcoming point (but not this one), and that should start being streamed into memory. These "upcoming textures" tend to be repeated by several points in a row, each time moving closer to the front of the list, so you are given a bit of breathing room to get everything loaded and ready. The map file's header has a value that indicates the maximum number of textures that may be visible at once, so you can pre-allocate that many textures (plus at least 16 for the upcoming ones) and then swap texture data in and out of that static set for the remainder of the level. I can say from experience that having these statically allocated gives a pretty significant performance boost in WebGL and reduces the amount of texture "popping" that I saw on a walkthrough.
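
A sketch of such a static pool, with hypothetical names throughout. The post only says that texture data is swapped in and out of a fixed set; the eviction policy here (least recently used among textures not pinned by the current visible or upcoming lists) is my own choice for illustration. In WebGL, "needs upload" would mean re-uploading pixel data into one of the pre-created texture objects instead of creating a new one.

```python
class TexturePool:
    """A fixed set of texture slots allocated once up front (max visible from
    the map header, plus 16 for upcoming textures). Tile data is swapped into
    slots instead of creating and deleting textures."""

    def __init__(self, max_visible: int, upcoming: int = 16):
        self.capacity = max_visible + upcoming
        self.free = list(range(self.capacity))
        self.slot_of = {}    # texture id -> slot index
        self.last_used = {}  # texture id -> frame it was last requested

    def request(self, tex_id, frame, pinned=frozenset()):
        """Make tex_id resident. Returns (slot, needs_upload). `pinned` holds
        ids that must not be evicted (visible now or listed as upcoming)."""
        if tex_id in self.slot_of:
            self.last_used[tex_id] = frame
            return self.slot_of[tex_id], False
        if self.free:
            slot = self.free.pop()
        else:
            evictable = [t for t in self.slot_of if t not in pinned]
            if not evictable:
                raise RuntimeError("pool too small for the pinned set")
            victim = min(evictable, key=self.last_used.__getitem__)  # least recently used
            slot = self.slot_of.pop(victim)
            del self.last_used[victim]
        self.slot_of[tex_id] = slot
        self.last_used[tex_id] = frame
        return slot, True
```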

Each point also contains a list of geometry that is visible while standing on that point (or standing between that point and the next one). This list has been pre-sorted by texture and vertex buffer offset (which are the same data structure, if you recall from the parsing notes), so binding of state is kept to a minimum.
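
Because the visible list is pre-sorted, the per-frame loop only needs to remember what is currently bound. This sketch emits abstract commands rather than real GL calls, and the tuple layout `(offset_id, first_index, count)` is hypothetical. In WebGL, a "bind" would roughly correspond to `bindTexture` plus `vertexAttribPointer` at the batch's vertex offset, and a "draw" to `drawElements`.

```python
def build_draw_list(visible_meshes):
    """visible_meshes: (offset_id, first_index, count) tuples, pre-sorted so
    meshes sharing an offset entry (texture + vertex base) are adjacent.
    Emits a bind only when the offset entry changes."""
    commands = []
    bound = None
    for offset_id, first_index, count in visible_meshes:
        if offset_id != bound:
            commands.append(("bind", offset_id))
            bound = offset_id
        commands.append(("draw", first_index, count))
    return commands
```

With sorted input, the number of binds equals the number of distinct offset entries in view.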

Every mesh in the level consists of nothing more than position and texcoord data and the texture to apply. All lighting is baked directly into the textures (as opposed to the lightmaps used by previous id games), which makes a lot of sense when you consider how everything in the world is uniquely textured.

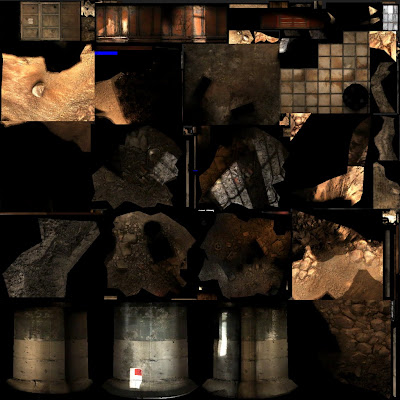

We're still getting RAGE-style virtualized textures, in that objects only use the texture space needed based on their size on screen. The difference is that rather than determine the texture levels needed dynamically like the full PC version of RAGE will do, all of the texture levels here are pre-computed based on the path you walk. The textures themselves are actually more accurately called "texture atlases", since they almost always contain textures for multiple objects at varied distances. Here's a good example, to get an idea of what they look like:

Most meshes in the level are stored multiple times, with the same geometry and different texture coordinates for each instance. This eats up more space, certainly, but saves you from needing to do any calculations at run time to determine how to align the mesh with its texture. So to render any given mesh you bind the texture, bind the vertex buffer at the appropriate offset, and then render starting at the given index. Done. No fancy shader tricks, no special effects, no weird state mangling. Just texture and draw.

This is why I give so much credit to the artists at id. I've seen a lot of comments about the video I posted claiming it was "The most impressive WebGL demo they had seen so far", which actually makes me laugh a bit because almost every other demo I've seen is graphically more complex in some way. Even my Quake 3 demo is far more interesting on a technical level than this one. What it highlights, though, is that your graphics don't NEED to be Crysis level, shader heavy monstrosities to look good. Art direction is SO much more important than the technical workings of the engine.

So that's it! I feel like after all the attention I've been receiving some people are going to feel like I just pulled away the curtain and revealed, not a wizard, but a simple old man. Sorry if this explanation makes anything seem less magical somehow, but I still think it's an incredible feat on id's part.

I highly encourage anyone who has enjoyed this demo to buy and play RAGE on their iDevice; it's a fun little game, and I always find it more interesting when I know what's going on under the hood. I'm happy to answer any questions you guys might have about the format (that I can, anyway), and look for my JavaScript source to be posted later this week!